You Might Have Missed Eliza, But It’s More Relevant Now Than Ever

It is a given that Big Tech is a part of daily life. It’s in our language: “I’ll just Google it.” “I’ve got Prime, says it’s being delivered tomorrow.” It’s in our hands, in the form of our phones, laptops, and tablets. To imagine a world without the internet is almost laughable. In the last year alone, millions of people were able to work from home or attend school remotely thanks to the infrastructure already in place. But Eliza, a 2019 independent game developed by Zachtronics, questions the idea that technology is inevitable or inescapable. Does Big Tech make our lives easier? Is it possible to be ethically conscious and still consume something made by a profit-driven corporation? Eliza may not have all the answers, but the questions it raises make for one of the most engaging visual novels in recent memory.

“What does a fulfilled life look like?”

Eliza is instantly intriguing. The game is set in a future that feels uncomfortably close to reality, where an AI-driven counseling program called Eliza is growing in popularity. Player character Evelyn Ishino-Aubrey helped design and develop the program three years ago, while she worked for Google-analogue Skhanda, but she stepped back from project management after a personal tragedy. Years away from software development have given Evelyn some perspective, and she has a lot of questions: not just about how Eliza has risen to prominence, but about her own role in the program’s development and its apparently widespread use in the present. The world has changed since she stepped away, and anyone who has had to shut themselves off from the world for an extended period of time will instantly relate to her caginess.

Part of Eliza’s success is its depiction of a plausible future. The Eliza program is not standard miraculous sci-fi technology. There is no body modification or magical healing tech; humans aren’t living on the moon or flying through the air in electric hovercars. Instead, Eliza is a computer program that listens to people and analyzes their responses. It doesn’t exactly give advice; instead, it asks the patient a variation of “tell me more about that”, and occasionally recommends medication. But what makes Eliza special in the eyes of the consumer is that clients don’t interact with Eliza through a computer screen. Instead, they schedule time at a counseling center and interface with a proxy, a human being who sits and listens to clients and reads what the Eliza program tells them to. This simple wrinkle makes an enormous difference. Clients who might not feel comfortable sitting in front of a screen and baring their soul to a computer can instead talk to a living, breathing person.

Since Eliza is a visual novel, its success or failure largely depends on the quality of its writing. Fortunately, Eliza thoughtfully interrogates every theme it brings up in its six chapters. There are hardly any plot twists of the kind a player would encounter in Danganronpa. Its gameplay is extremely limited, and players make few meaningful choices until the very last chapter of the game. But Eliza soars due to its well-drawn characters and stellar voice acting.

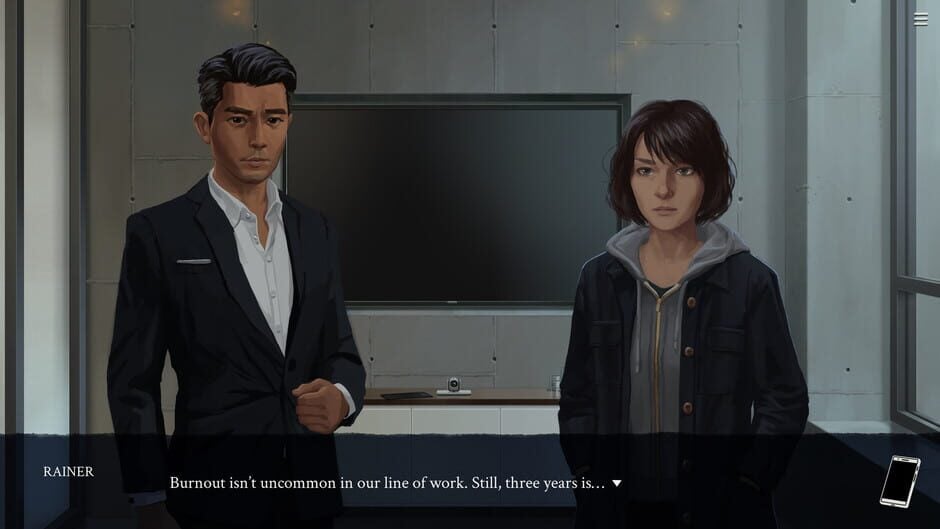

Evelyn may have helped create the Eliza self-help program, but she was hardly alone. In her orbit are Nora, a superstar coder-turned-musician who left Big Tech behind; Soren, a mental health advocate with an idea for a virtual-reality startup and alcohol abuse problems; and Rainer, a cold, calculating upper-management type with inscrutable motivations. Evelyn also interacts with a true-believer counseling center manager and a man who has taken over the development of the Eliza program in her absence. Most importantly, she becomes an Eliza proxy herself and deals with clients using the very program she helped develop. Clients range from disgruntled Skhanda employees to AI skeptics to a lonely old woman who just wants a conversation. Evelyn experiences the front-facing end of the technology she poured years of her life into developing, and seeing her come to grips with what Eliza has become is compelling.

Viscerally Human

Eliza doesn’t shy away from hard questions. The game touches on the baked-in misogyny of the tech sector, the unhealthy culture of crunch, and the everyday anxiety familiar to anyone who has struggled in their early or mid-30s. Depression and burnout are confronted bluntly, and characters discuss not just their fears for the encroaching future but their fears of the present. Eliza is concerned with human error, and with how even the most sophisticated programs lack nuance when it comes to understanding the human experience.

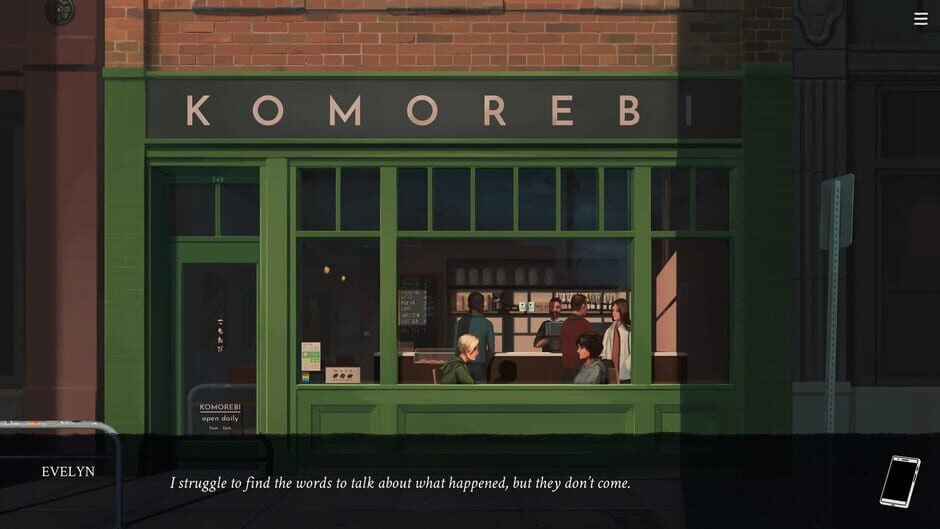

Part of what makes Eliza so compelling is the nuance spread throughout. Early in the story, Evelyn meets up with an old friend at a coffee shop. She notices that the barista has spelled her name wrong on her coffee cup, a situation that anyone who has ever been to a Starbucks can identify with. It’s human error, the kind that colors almost any interaction. Later in the game, Evelyn meets with Rainer, the executive in charge of pushing Eliza beyond its limits and increasing the scope of the program’s capabilities. His ambitions are evident, and it’s easy to get caught up in Rainer’s idea of what Eliza could become. Even Evelyn is intrigued, but in the back of the player’s mind, the tiny, tossed-off comment about how easily a barista can misspell a person’s name lingers.

In the 1960s, one of the first attempts at artificial intelligence was developed by Joseph Weizenbaum at MIT. The program was named ELIZA, and though it was sophisticated for its time, its programming was basically limited to repeating back information that was fed into it. The creator’s goal was to demonstrate that though a computer could indeed imitate human behavior, that behavior was ultimately superficial. Eliza posits a world where Big Tech has cleared the initial hurdle of getting people to trust its program, and is now grappling with whether or not Eliza can be used for a greater purpose. Eliza’s responses to clients are often laughably inadequate, but it’s not difficult to envision the potential just within reach.

Eliza has serious questions about ethics in engineering, but it doesn’t claim to have all the answers. After a few chapters, Evelyn gains access to a feature called “transparency mode” during her proxy sessions, which lets her access the private messages and emails of a few of her clients. With this extra information, the Eliza program is ostensibly able to make more nuanced recommendations in the future. But is this information ethically obtained? Does anyone ever really read the fine print on any given user agreement? It’s easy to believe that this technology already exists in some form, given the way algorithms have recommendations ready to go for anything from restaurants to YouTube videos. Is this such a bad thing? How much is too much of an invasion of privacy? In Eliza, Rainer is adamant that “anything is possible with enough data,” and this sentiment doesn’t seem to be far from the truth. Though Rainer might appear to be a tone-deaf tech executive, his view is not far from how corporations like Amazon and Google treat data: as everything.

Visual novels are unique. They rarely showcase complicated mechanics, a hallmark of most video game design. But a visual novel is the perfect form to showcase written content, and in this regard, Eliza is spectacular. The writing is uniformly brilliant, and the character portraits are lushly rendered. It’s a game that feels more relevant with every passing minute, as the influence of social media platforms and the corporations behind them grows. The game may not offer direct solutions to the oh-so-human problems of anxiety and helplessness, but it holds up a mirror to society. Sometimes, just examining an issue can provoke new conversations.

-

Features4 weeks ago

Features4 weeks ago10 games that pay real rewards in 2026 (PC, browser and competitive)

-

Technology3 weeks ago

Technology3 weeks agoBest Software To Boost FPS In Games

-

Features4 weeks ago

Features4 weeks agoTop Mobile Horse Racing Games for Derby Fans This 2026

-

Features4 weeks ago

Features4 weeks agoStudio Ghibli’s Next Anime Finally Revealed, Here’s Your First Look ✨

-

Features2 weeks ago

Features2 weeks agoThis Cozy Isekai Might Become Your Next Comfort Watch… And You Won’t Expect Why

-

Culture4 weeks ago

Culture4 weeks agoHow Global Gaming Friendships Are Shaping the Future of Social Play

-

Features4 weeks ago

Features4 weeks agoWhy Game Review Podcasts Shape How You Shop for Digital Games

-

Features4 weeks ago

Features4 weeks agoAre PS5 AAA Exclusives Losing Their Identity in the Modern Gaming Era?

-

Features4 weeks ago

Features4 weeks agoHow Players Save Time In Grind-Heavy Games

-

Esports3 weeks ago

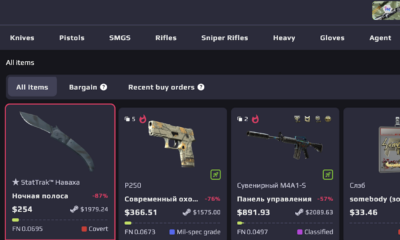

Esports3 weeks agoBest CS2 Skin Marketplaces in 2026: Where to Buy and Sell Skins Safely

-

Features4 weeks ago

Features4 weeks agoHow Indie Games Are Redefining Creativity in the Gaming Industry

-

Features4 weeks ago

Features4 weeks agoWhy So Many Gamers Are Upgrading Their Wishlists After Anime Reviews and Podcasts