
The Technology Behind Nintendo’s Consoles: 2001-2007

The early 2000s marked the end of one era and the beginning of another for Nintendo. How did the company’s consoles measure up?

In recent years, Nintendo has been known for the eccentricity of its hardware more than anything else. From the Wii's motion controls to the Wii U's dual-screen gameplay and the Switch's portability, many analyses have focused on the physical design of Nintendo's hardware while ignoring the systems' most critical underpinnings: their architecture and technology. While Sony and Microsoft have been locked in a fierce war over console specifications for the past three generations, pitting their machines against each other in an ever-evolving battle for supremacy, Nintendo has focused on software.

That's a shame, though. Nintendo is the progenitor of the modern games industry and a talented hardware manufacturer, and the technology behind its consoles, along with what makes them unique, deserves a closer look. In part two of a three-part series, we dive deep into Nintendo's design choices for the esoteric GameCube and the revolutionary Wii.

Find part one of this series here.

Nintendo GameCube (GCN): Lost at Sea

Licking its wounds after a fifth-generation drubbing at the hands of Sony, Nintendo went back to the drawing board and analyzed its mistakes. Its next move was to address what had gone wrong with the N64 and avoid repeating those crippling errors. Whereas both the SNES and N64 had been challenging for even the most seasoned programmers, Nintendo wanted to change that with its upcoming “Dolphin” game system. Announced by Nintendo of America President Howard Lincoln in 1999, Dolphin was marketed as “33% above the projected performance data of [Sony's] PlayStation 2” and “easily twice as fast as [Sega's] Dreamcast” for “pretty cheap[,]” with the power to, as Shigeru Miyamoto put it, “handle any kind of software developers are interested in creating.”

IBM's “Gekko” was Nintendo's first foray into the PowerPC architecture. Credit: Wikipedia.

At the core of this greater graphical capability were IBM's “Gekko” CPU and ATI's “Flipper” GPU. Similar to IBM's 32-bit PowerPC 750CXe, used in some Apple G3 machines, Gekko was an incredibly power-efficient CPU whose short pipeline kept its clock speed modest: it ran at a maximum of 485 MHz. Chosen for its cool operating temperature and small form factor, which matched Nintendo's overall design motif for the GameCube, Gekko was cheap, easy to produce, and power-efficient: three key factors that allowed it to play nicely with the GameCube's real powerhouse, ATI's Flipper GPU.

After a nasty break-up with Silicon Graphics, Nintendo contracted graphics firm ArtX to design Flipper in 1998. In 2000, however, ArtX was bought by GPU developer ATI, which went on to ship Flipper officially. Originally clocked at 200 MHz, then lowered to 162 MHz when Nintendo adjusted Gekko's clock rate shortly before launch, Flipper was a potent, efficient GPU that leveraged several technologies (e.g. anti-aliasing) that were substantial advancements over what previous console generations had offered.

Part of this advancement came from how Flipper used its 3MB of on-die memory. With over half of the GPU's 51 million transistors dedicated to this 3MB arrangement (a 2MB Z-buffer and a 1MB texture cache), Nintendo leaned heavily on Flipper's embedded 1T-SRAM to obtain results that were admirable even when compared to contemporary PCs. Though the chip's overall design had some flaws that reflected ATI's limited involvement, including a reliance on the same 1T-SRAM for the system's main memory, Flipper ultimately proved a cost-effective, fast GPU with the powerful tools developers wanted.

“Flipper” was the powerful GPU at the heart of the GameCube. Credit: Wikipedia.

The GameCube was Nintendo's first console with full support for 480p progressive-scan component video. While it lacked the Xbox's support for HD output, the GameCube could produce a much cleaner image than any Nintendo console before it. Unfortunately, the system's component cables contained a proprietary DAC (digital-to-analog converter), which made them nearly impossible for third parties to replicate at the time. The console also had several expansion ports (two serial ports and one high-speed parallel port) that left room for future expansion. Nintendo would eventually use the first serial port for modem and Ethernet add-ons that provided basic online functionality, and the parallel port for the Game Boy Player, which allowed Game Boy games of any generation to be played on the GameCube. When Nintendo released a second model of the GameCube, the DOL-101, later in the console's lifespan, it removed the second serial port and component video support.

As with the N64, Nintendo's main problem with the GameCube was its approach to physical media. The N64 had opted for cartridges over CDs, causing a myriad of problems for developers in the process; for the GameCube, Nintendo finally adopted an optical format. However, still plagued by the piracy worries that had kept the N64 on cartridges, Nintendo chose a variant of the 8cm mini-DVD as its standard. Compared to the PlayStation 2, whose discs held between 4.7 and 8.5 GB, the GameCube's mini discs could hold only 1.5 GB. This was rarely a problem for first-party titles, which were designed around the system's limitations, or for multi-platform games, which generally performed better on Nintendo's machine anyway, but the format was an issue because it prevented DVD playback.


For all its competence in designing every other aspect of the GameCube, Nintendo's decision to leave out DVD playback sank the GameCube's chances of success. The PlayStation 2 was released a year earlier and for $100 more, but it carried several key advantages, including the ability to play full-size retail DVDs, a feature that three years earlier had cost $599 on its own. For a second straight console generation, Nintendo found itself faltering despite having more advanced technology than its closest competitor.

Though the GameCube launched with plenty of third-party support, that support soon evaporated, and Nintendo was left with a paltry 21 million units in hardware sales, roughly a third of what it had achieved with the NES. By 2004, Nintendo was forced to come up with something different, a successor to the GameCube that competed not on power but on something else entirely: innovation.

Nintendo Wii: The Casual Connection

With the GameCube clearly a failure by the middle of 2004, Nintendo decided to move on from its flailing console and chart its path for the next generation. Nintendo President Satoru Iwata offered the first tantalizing hints about the company's next console at GDC in 2005. Powered by IBM's Broadway CPU and ATI's Hollywood GPU, the “Revolution” would offer backwards compatibility and Wi-Fi support, and it would be developer-friendly. Instead of focusing on raw power, as the GameCube had, the Revolution would carry a parent-friendly target price of $250 and take a completely different path from other next-generation consoles, like Microsoft's then-upcoming Xbox 360.

Nintendo opted for a blue ocean strategy, going after a market that hadn't been targeted before: casual gamers who had never bought a console. Its choice of technology reflected that. IBM's new Broadway CPU raised the clock rate to 729 MHz, and ATI's Hollywood GPU raised it to 243 MHz. Those upgrades, coupled with 512MB of NAND storage, native widescreen support, and a library of classic games, gave the Revolution a technical advantage over the aging GameCube. However, the technology inside was not its greatest advantage.

ATI's “Hollywood” chip was interesting, but not the focus of the Wii.

Instead, the Revolution relied on innovation. Unveiled in September 2005, its controller turned heads. A singular, TV-remote-style motion controller with a pointer and few face buttons, it went headfirst against the leading industry trend, bucking convention and showing the first signs of a new identity under Iwata that focused on a more family-friendly image. Rather than putting an analog stick on the main controller, Nintendo relied on the add-on Nunchuk peripheral to provide proper analog input. Later came the name: Wii. The subject of a multitude of jokes, puns, and memes following its reveal, the Wii had all the markings of a non-starter. If Nintendo had competed on power before and lost, what would happen now that one of its few advantages was gone?

While it was leagues behind the PS3 and Xbox 360 technologically and had little appeal for hardcore gamers, the Wii succeeded in one critical area: selling the appeal of pure, simple fun. Beloved by everyone from professional reviewers to soccer moms, it launched to rave reviews, sold out repeatedly in its first few years, and lit the retail world on fire, selling over 600,000 units in the US in just over a week on its way to an incomprehensible 101 million units in lifetime sales. The Wii marked the beginning of an incredible turnaround for Nintendo. After three straight generations of declining home console sales, the company had finally expanded its console market, connecting with casual gamers on a scale never seen before or since.


Much like the NES, the Wii's technology wasn't new or technically impressive, but the way Nintendo used it was incredibly innovative. Unlike the NES, however, the Wii didn't just pair aged technology with good games; it innovated on how those games were played, incorporating motion controls into Nintendo classics such as Mario Kart and creating new classics, like Wii Sports, that showed just how flexible Nintendo could be.

However, like all successes, Nintendo’s venture with the Wii was short-lived. By 2011, there were rumblings of Project Cafe, a “Wii 2” that, for better or for worse, would decide the future of Nintendo…

Be sure to check out the final part of this series, as we discuss the technical history behind both the Wii U and Switch.

RELATED

The Technology Behind Nintendo’s Consoles: 2012 – Present

The Technology Behind Nintendo’s Consoles: 1983-1996

-

Features4 weeks ago

Features4 weeks ago10 games that pay real rewards in 2026 (PC, browser and competitive)

-

Technology3 weeks ago

Technology3 weeks agoBest Software To Boost FPS In Games

-

Features4 weeks ago

Features4 weeks agoTop Mobile Horse Racing Games for Derby Fans This 2026

-

Features2 weeks ago

Features2 weeks agoThis Cozy Isekai Might Become Your Next Comfort Watch… And You Won’t Expect Why

-

Features4 weeks ago

Features4 weeks agoStudio Ghibli’s Next Anime Finally Revealed, Here’s Your First Look ✨

-

Features4 weeks ago

Features4 weeks agoWhy Game Review Podcasts Shape How You Shop for Digital Games

-

Culture4 weeks ago

Culture4 weeks agoHow Global Gaming Friendships Are Shaping the Future of Social Play

-

Features4 weeks ago

Features4 weeks agoAre PS5 AAA Exclusives Losing Their Identity in the Modern Gaming Era?

-

Features4 weeks ago

Features4 weeks agoHow Players Save Time In Grind-Heavy Games

-

Esports3 weeks ago

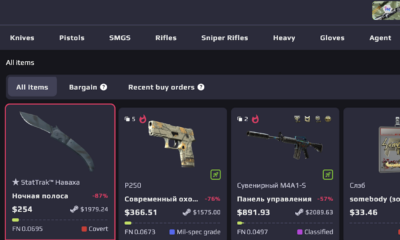

Esports3 weeks agoBest CS2 Skin Marketplaces in 2026: Where to Buy and Sell Skins Safely

-

Features4 weeks ago

Features4 weeks agoHow Indie Games Are Redefining Creativity in the Gaming Industry

-

Features4 weeks ago

Features4 weeks agoWhy So Many Gamers Are Upgrading Their Wishlists After Anime Reviews and Podcasts